I have worked with Terraform for a few years, from entirely open demo environments to customers with strict security and compliance requirements. I haven’t had the same opportunity to work with Pulumi in strictly regulated environments, but I know Pulumi has grown by leaps and bounds while I focused on Terraform. I’m curious to look at Pulumi through the lens of what I’ve come to learn about working on Terraform with teams in a regulated environment.

Changing infrastructure, whether with Pulumi or Terraform, carries risk. I’m familiar with the tools and techniques for mitigating these risks with Terraform. I’d like to explore how to do the same with Pulumi.

How does Pulumi work?

When explaining Terraform, I describe a development cycle with these steps: coding, planning, reviewing, approving, and applying. Pulumi and Terraform solve many of the same problems and work in similar ways. Pulumi is a desired state engine. The engine tracks the current state in a file and compares the current state to the desired state expressed by the Pulumi program. After this comparison, Pulumi uses the differences to create a plan to update the infrastructure to match the desired configuration. Once this plan executes, Pulumi records the new state in the state file.
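To make the idea of a desired state comparison concrete, here is a toy sketch in shell. It is not how Pulumi's engine is implemented; the function name `plan_changes` and the resource names are hypothetical, and real engines also track properties and dependencies, not just names.

```shell
#!/usr/bin/env bash
# Toy illustration of a desired-state comparison. This is NOT Pulumi's engine;
# plan_changes and the resource names used with it are hypothetical.

# plan_changes CURRENT DESIRED
# Each argument is a space-separated list of resource names.
# Prints one line per resource: "same NAME", "create NAME", or "delete NAME".
plan_changes() {
  local current=" $1 " desired=" $2 " name
  # Anything in the desired list is either already present or must be created.
  for name in $2; do
    case "$current" in
      *" $name "*) echo "same $name" ;;
      *) echo "create $name" ;;
    esac
  done
  # Anything tracked in the current state but no longer desired must be deleted.
  for name in $1; do
    case "$desired" in
      *" $name "*) ;;
      *) echo "delete $name" ;;
    esac
  done
}
```

Calling `plan_changes "rg vnet old-ip" "rg vnet subnet"` reports `rg` and `vnet` as unchanged, `subnet` as a create, and `old-ip` as a delete.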

Pulumi differs from Terraform by allowing developers to define infrastructure configurations using the programming languages and tools they already know. Out of the box, Pulumi provides language runtimes for Node.js (including TypeScript), Python, .NET Core, and Go. Under the hood, the language runtime communicates all the desired resources to a deployment engine. The deployment engine works to compute the needed infrastructure changes. This processing is transparent to the developer, and the developer workflow is simple:

- Write a Pulumi program in their language of choice. (Pulumi can also generate a starter project for you.)

- Execute pulumi stack init to create a stack to track deployment state.

- Run pulumi up to create or modify the deployed infrastructure.
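The two commands above can be wrapped in a small shell function. This is only a sketch: `run_workflow` and the `PULUMI_BIN` override are hypothetical names introduced here so the sequence can be dry-run against a stub instead of the real CLI.

```shell
#!/usr/bin/env bash
# Sketch of the two-command workflow. run_workflow and PULUMI_BIN are
# hypothetical names; PULUMI_BIN lets you substitute a stub for a dry run.

# run_workflow STACK_NAME
run_workflow() {
  local stack="$1"
  local pulumi="${PULUMI_BIN:-pulumi}"
  # Create a stack to track deployment state; stop if that fails.
  "$pulumi" stack init "$stack" || return 1
  # Create or modify the deployed infrastructure. Without --yes, the real CLI
  # shows a preview and prompts the operator before changing anything.
  "$pulumi" up --stack "$stack"
}
```

Running `PULUMI_BIN=echo run_workflow dev` prints the two command lines that would be executed, without touching any infrastructure.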

Following this simple workflow, a developer can provision cloud infrastructure in minutes. Pulumi’s up command is an all-in-one create or update operation similar to apply in Terraform. With no additional arguments, pulumi up will:

- Run the current Pulumi program (building it if the language requires).

- Compute the new target infrastructure state.

- Create a plan consisting of creates, updates, or deletes to reach the target state.

- Display a preview of the proposed updates and prompt the operator for permission to make changes.

- Perform the updates once the operator provides approval.

Making changes to infrastructure is serious business, and we want to make it less risky. Mistakes can lead to outages, security breaches, or data loss, which in turn can lead to unemployment. The simple workflow is safe because Pulumi relies on the operator’s judgment to determine if the proposed changes are acceptable to deploy. The interactive flow is a double-edged sword. The downside is that an operator must be present at the console. This requirement is also the upside: with an operator at the console, a human mind is always available to evaluate the preview.

Unlike Terraform, Pulumi does not guarantee that the changes shown during the preview will be the exact changes applied to infrastructure. Nor can you save a plan to an artifact and run that plan later, which is a standard best practice with Terraform.

After the operator gives consent, Pulumi will refresh its view of the current infrastructure before making changes. Pulumi can catch any configuration drift that occurs while the operator is “thinking.”

In contrast, the Terraform workflow guarantees that “Terraform will perform exactly these actions.” This guarantee sounds good, but it means that if the infrastructure drifts before Terraform applies the plan, Terraform does not detect that drift. Eventually, Terraform will detect and correct the infrastructure drift—but not until the next iteration.

Pulumi will catch the drift the first time. This approach is functionally equivalent to running two iterations of Terraform’s plan and apply cycle. Both methods work. Both reinforce that the infrastructure code represents the desired state. Pulumi’s decision to refresh and replan before making changes is simply a slightly more aggressive approach to the problem compared to Terraform. Still, the lack of a saved plan can be uncomfortable for Terraform veterans used to an exact plan, strictly applied.

Running Pulumi in Azure DevOps Pipelines

Let’s start with a simple Pulumi program that we want to deploy with automation. This program deploys these resources:

- An Azure resource group

- A virtual network

- A subnet

- A public IP

- A network security group that allows SSH

- A network interface

- A virtual machine

The Pulumi documentation provides a starting point for running Pulumi in a DevOps pipeline. This sample uses build conditions to control whether Pulumi runs in preview mode or non-interactive update mode. The preview mode runs for manual builds and for pull request validation builds. When the pipeline executes for a continuous integration trigger, it will automatically update infrastructure using Pulumi.

See the full pipeline below:

    name: 1.0.$(Rev:r)

    trigger:
      batch: true
      branches:
        include:
          - main
      paths:
        include:
          - 'pulumi-devops-pipeline/stack'

    pr:
      - main
      - development

    variables:
      - group: pulumi-access-token
      - name: azure-subscription
        value: 'Azure - Personal'
      - name: stack-name
        value: 'jamesrcounts/AzureCSharp.DevOpsPipeline/azcs-devopspipeline-dev'
      - name: working-directory
        value: 'pulumi-devops-pipeline/stack'

    pool:
      vmImage: 'ubuntu-latest'

    steps:
      - checkout: self
        fetchDepth: 1

      - task: Pulumi@1
        displayName: 'Preview Infrastructure Changes'
        condition: or(eq(variables['Build.Reason'], 'PullRequest'), and(eq(variables['Build.Reason'], 'Manual'), ne(variables['manual-deploy'], true)))
        inputs:
          azureSubscription: $(azure-subscription)
          command: 'preview'
          args: '--diff --refresh --non-interactive'
          cwd: $(working-directory)
          stack: $(stack-name)

      - task: Pulumi@1
        displayName: 'Deploy Infrastructure Changes'
        condition: or(or(eq(variables['Build.Reason'], 'IndividualCI'), eq(variables['Build.Reason'], 'BatchedCI')),and(eq(variables['Build.Reason'], 'Manual'),eq(variables['manual-deploy'], true)))
        inputs:
          azureSubscription: $(azure-subscription)
          command: 'up'
          args: '--yes --diff --refresh --non-interactive --skip-preview'
          cwd: $(working-directory)
          stack: $(stack-name)

I primarily want to talk about how this works as part of a developer workflow. Before that, here are a few notes about getting the pipeline running in Azure DevOps:

- Install the Pulumi Azure Pipelines Task extension in your Azure DevOps organization.

- Configure a pipeline to run in Azure DevOps.

  - Create a DevOps project.

  - Create a subscription scoped Azure service connection.

    - The pipeline YAML references this name.

    - I used Azure - Personal as the name.

  - Define a variable group to hold the Pulumi access token.

    - I named my variable group pulumi-access-token and referenced it in the pipeline.

    - Create a secret variable named pulumi.access.token in the pulumi-access-token group.

  - Create a pipeline.

    - Choose GitHub (YAML).

    - Grant OAuth access if needed.

    - Choose your infrastructure repository from the list.

    - Choose “Existing Azure Pipelines YAML file.”

    - Pick your YAML file’s branch (if the pipeline is not on the default).

    - Choose or enter the path to your YAML file.

    - Choose Run (or Save) to save the pipeline.

  - Grant permission to the pipeline to use the service connection.

- Configure a branch protection rule in GitHub that requires all status checks to pass. Pull Request builds in Azure DevOps will automatically show up as status checks in GitHub.

Some of the above steps are Pulumi specific. Some are just everyday work needed to set up most Azure DevOps pipelines. The essential parts of this build pipeline are:

- The pipeline defines a batched continuous integration trigger for the main branch.

- We also define a pull request trigger, which activates for PRs to the main or development branches.

- A job step that runs pulumi preview for pull requests.

- A job step that runs pulumi up for continuous integration builds.

With this pipeline, we can implement a branching strategy that controls infrastructure changes. We do not want deployments to change on every commit, but only after review. The first part of the development lifecycle for a team member working in a shared Pulumi repository might look like this:

- Create a topic branch for an infrastructure task.

- Open a pull request to the development branch when the coding is complete.

- This pull request triggers a validation build and generates a preview of the changes.

- Other developers on the team can review the code changes and the Pulumi preview side-by-side.

- Once enough team members approve of the changes, merge the pull request into development.

All changes should come into these branches via pull requests so the team can review changes alongside the infrastructure preview. We enforce this flow by preventing direct commits to the development and main branches. Azure DevOps implements the same feature with branch policies.

Since I am using GitHub as my source code repository, I used branch protection rules.

The development branch is an integration and staging area, so approving this pull request should not trigger an infrastructure deployment. After successfully merging with the development branch, teammates should update their existing topic branches to match the new baseline. Deployments will originate from the main branch only. As we dig in a little further, we will see that I’ve added an exception to that rule, but we can stay in the typical release flow for now. The next steps in the release flow are:

- Open a pull request to the main branch when preparing to release.

- This pull request triggers a validation build and generates a preview of the changes.

- The team can review the Pulumi preview, which shows the proposed changes to infrastructure.

- Once enough team members approve of the changes, merge the pull request into main.

The merge to the main branch will run pulumi up with the --yes flag. The --yes flag suppresses the prompt for permission and automatically approves the plan. The implication for the team working with this pipeline is that a merge to main is equivalent to authorizing the release.

As an individual developer, merging and previewing twice before deployment feels like overkill. For a developer working alone, it is. But the two stages benefit teams because they allow a rollup of all pending changes before a release. In a Terraform pipeline, we accomplish the same rollup by creating releases and canceling older releases when creating a new one. In this Pulumi flow, “releases” queue up as commits on the development branch. When deciding to deploy, the team can choose the latest development head or any intervening commit since the last deploy. The second pull request to the main branch gives the team a final chance to review what infrastructure will change. Groups restricted to deploying during scheduled maintenance windows can delay the merge to main until an appropriate time.

There are times or environments where it makes sense to bypass all these safety checks and deploy changes directly. It might be okay to try experimental builds in a development environment, or a hotfix may need expedited deployment to production. In these cases, one can use the pulumi CLI directly if the infrastructure policies allow changes outside of pipelines. If all infrastructure changes must run through automation, we can use a manual deploy option. Usually, the pipeline conditions treat manually queued builds like pull request validation builds, and only pulumi preview runs. By setting the manual-deploy variable to true, we signal the pipeline to run pulumi up like a continuous integration run and deploy the changes.
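The two task conditions in the pipeline can be hard to read as nested or/and expressions. As a rough restatement, the same decision table can be written as a shell function. The name `decide_action` is hypothetical, and the function only mirrors the conditions shown in the YAML; it is not part of the Pulumi task.

```shell
#!/usr/bin/env bash
# Restates the pipeline's two task conditions as one decision function.
# decide_action is a hypothetical helper, not part of Pulumi or Azure DevOps.

# decide_action BUILD_REASON [MANUAL_DEPLOY]
# Prints "preview", "up", or "none".
decide_action() {
  local reason="$1" manual_deploy="${2:-false}"
  case "$reason" in
    PullRequest)
      echo "preview" ;;            # PR validation builds only preview
    IndividualCI|BatchedCI)
      echo "up" ;;                 # a merge to main deploys
    Manual)
      if [ "$manual_deploy" = "true" ]; then
        echo "up"                  # explicit opt-in to a manual deploy
      else
        echo "preview"             # manual builds preview by default
      fi ;;
    *)
      echo "none" ;;               # e.g. scheduled builds match neither task
  esac
}
```

For example, `decide_action Manual` prints `preview`, while `decide_action Manual true` prints `up`.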

Toward a Private Implementation

My first swag at hosting a Pulumi pipeline in Azure DevOps is simple, and it works. However, some organizations may have concerns with the mechanisms Pulumi uses to make things simple. I’m not saying that Pulumi does anything wrong. I’m just saying some people are paranoid (perhaps with good cause). To understand why paranoid people might object to my first Pulumi pipeline, we need to understand the Pulumi Service backend.

During the first pipeline iteration, I didn’t dig into how Pulumi state storage works. Pulumi uses remote state storage by default (contrast with Terraform, which uses a local file by default). External state storage enables multiple developers and systems to collaborate on the same code base. Without this feature, stateless build agents would never have access to the current state of infrastructure deployments. So, setting up remote storage becomes the first pre-requisite for automation. Because Pulumi uses remote storage by default, each new stack is ready for automation out of the box.

The Pulumi Service provides the default backend for state storage and encryption keys (used to protect sensitive configuration). My first pipeline leverages the Pulumi Service backend, and this is why I provided the pulumi.access.token variable in the first pipeline. The service is free for individuals who don’t need support or team RBAC features. These default settings make it easy to get started with collaboration and automation. Avoiding the headache of adequately configuring these systems is very valuable. As we will see, we can’t use Pulumi to bootstrap these systems, so we must drop down to more primitive scripts.

Some organizations will always object to paying anything and will avoid the cost of the Pulumi Service’s paid tiers. There is value for money in paying for this service, and some organizations will see that. However, heavily regulated organizations with strict audit requirements often have policies that prevent using the Pulumi SaaS option. Right or wrong, getting approval to use SaaS at some organizations can be difficult.

For teams that need to block all internet-facing access to state storage, Pulumi offers two options. First, you can host the Pulumi Service yourself by purchasing an enterprise license. For a team just getting started, this might be a big ask, especially if the benefits of IaC are as yet unproven in their organization. Second, and fortunately, the Pulumi backends are modular and integrate with various cloud storage providers, so you can manage state storage yourself.

Since we are targeting Azure, our next iteration of the DevOps pipeline will leverage an Azure Storage Account as the state backend. The example repository contains a script to bootstrap a backend storage account with some basic security features. In their default configuration, Azure storage accounts include encryption at rest and require HTTPS. In addition to these defaults, the script configures a storage account with geo-redundancy, minimum TLS version requirements, soft-deletes, and disabled anonymous access.

The backend is still accessible from the internet (with the right credentials). It could be further secured using the storage account’s built-in firewall, or the internet can be blocked entirely using the Private Endpoint feature. The script doesn’t set up either type of protection, but in the real world, some customers insist on those features as well.

Once we create the storage account, we need to let Pulumi know that we want to use it. I made a new copy of the pipeline sample and began by getting Pulumi working interactively with my storage backend. Following the docs made this relatively straightforward. Take these steps in the Pulumi project folder:

- Set an environment variable called AZURE_STORAGE_ACCOUNT for the storage account name.

  - The script created a storage account named sapulumi0f50472c917b4584 for me.

  - So export AZURE_STORAGE_ACCOUNT=sapulumi0f50472c917b4584 sets the expected value in my shell session.

- Login: pulumi login --cloud-url azblob://<container>.

  - The container token is your blob container’s name inside the storage account, not the storage account name.

  - The backend storage script I used created a container named pulumi for me.

  - Therefore pulumi login --cloud-url azblob://pulumi was my exact command.

  - You should see a message like this, indicating a successful login.

    vscode ~/workspaces/pulumi (dev) $ export AZURE_STORAGE_ACCOUNT=sapulumi0f50472c917b4584
    vscode ~/workspaces/pulumi (dev) $ pulumi login --cloud-url azblob://pulumi
    Logged in to codespaces_456f36 as vscode (azblob://pulumi)
    vscode ~/workspaces/pulumi (dev) $

I ran pulumi preview to verify that everything was working, but it failed because the storage account blocks anonymous access to the blob data. To get Pulumi working, we need to provide either the storage account key or a shared access signature (SAS) token. For this interactive test, I’ll just use the storage account key. This key is available in the Azure Portal. I copied it from there and used export AZURE_STORAGE_KEY=<key> to load it into my shell session.

This environment variable resolves the access error, and on the first run-through, Pulumi prompts you to create and configure your stack. First, it asked me to specify a stack name, and I entered azcs-privatebackend-dev. Next, Pulumi asked for a passphrase to protect configuration secrets. As with the storage backend, Pulumi lets you take control of the secrets provider. The Pulumi Service provides one, but since we aren’t using the service, the next default is the passphrase provider. Pulumi also supports more robust cloud key managers like Azure Key Vault.

For now, I’ll just go with the passphrase provider and provide a passphrase. Once I do that, Pulumi generates a preview. The pulumi up command works as expected but again prompts for the secret passphrase. The output helpfully hints that setting the PULUMI_CONFIG_PASSPHRASE environment variable can bypass this prompt. After pulumi up finishes, Azure has created our resources, and we have a stack file in our storage account.

The next challenge is updating our pipeline to use our private backend. Most of the yak-shaving steps are the same, but the pulumi-access-token variable group is no longer needed. Instead, I created a variable group called pulumi-azure-backend for the configuration required to set up Azure Storage as the self-managed backend. This group contains the following keys and values:

- AZURE_STORAGE_ACCOUNT: the storage account name.

- AZURE_STORAGE_CONTAINER: the name of my blob container.

  - Pulumi doesn’t require this, but I needed this value in a couple of places in the scripts.

- PULUMI_CONFIG_PASSPHRASE: the passphrase for my secret provider.

- AZURE_STORAGE_AUTH_MODE: login

  - This item is used by the Azure CLI when creating a SAS token and is not Pulumi specific.

With these values, we can leverage this pipeline:

    name: 1.0.$(Rev:r)

    trigger:
      batch: true
      branches:
        include:
          - main
      paths:
        include:
          - 'pulumi-private-backend/stack'

    pr:
      - main
      - development

    variables:
      - group: pulumi-azure-backend
      - name: azure-subscription
        value: 'Azure - Personal'
      - name: stack-name
        value: 'azcs-privatebackend-dev'
      - name: working-directory
        value: 'pulumi-private-backend/stack'

    pool:
      vmImage: 'ubuntu-latest'

    steps:
      - checkout: self
        fetchDepth: 1

      - task: AzureCLI@2
        displayName: 'Generate Storage Key'
        inputs:
          azureSubscription: 'Azure - Personal'
          scriptType: 'bash'
          scriptLocation: 'scriptPath'
          scriptPath: '$(working-directory)/scripts/generate-storage-keys.sh'
          failOnStandardError: true

      - task: AzureCLI@2
        displayName: 'Environment Setup'
        inputs:
          azureSubscription: 'Azure - Personal'
          scriptType: 'bash'
          scriptLocation: 'scriptPath'
          scriptPath: '$(working-directory)/scripts/environment-setup.sh'
          addSpnToEnvironment: true
          failOnStandardError: true

      - task: Bash@3
        displayName: 'Pulumi Run'
        env:
          AZURE_STORAGE_SAS_TOKEN: $(AZURE_STORAGE_TOKEN)
          PULUMI_CONFIG_PASSPHRASE: $(PULUMI_CONFIG_PASSPHRASE)
          ARM_CLIENT_ID: $(AZURE_CLIENT_ID)
          ARM_CLIENT_SECRET: $(AZURE_CLIENT_SECRET)
          ARM_SUBSCRIPTION_ID: $(AZURE_SUBSCRIPTION_ID)
          ARM_TENANT_ID: $(AZURE_TENANT_ID)
        inputs:
          targetType: 'inline'
          script: |
            #!/usr/bin/env bash
            set -e -x

            # Download and install pulumi
            curl -fsSL https://get.pulumi.com/ | bash
            export PATH=$HOME/.pulumi/bin:$PATH

            # Login into pulumi. This will require the AZURE_STORAGE_ACCOUNT
            # environment variable.
            pulumi login -c azblob://${AZURE_STORAGE_CONTAINER}
            pulumi stack select $(stack-name)

            COMMON_ARGS="--diff --refresh --non-interactive"

            if [ "${BUILD_REASON}" = "PullRequest" ] || { [ "${BUILD_REASON}" = "Manual" ] && [ "${MANUAL_DEPLOY}" != "true" ]; }; then
              pulumi preview ${COMMON_ARGS}
            elif [ "${BUILD_REASON}" = "IndividualCI" ] || [ "${BUILD_REASON}" = "BatchedCI" ] || { [ "${BUILD_REASON}" = "Manual" ] && [ "${MANUAL_DEPLOY}" = "true" ]; }; then
              pulumi up --yes --skip-preview ${COMMON_ARGS}
            else
              echo "##vso[task.logissue type=error]No run conditions matched."
              exit 1
            fi
          workingDirectory: '$(working-directory)'

Our new pipeline has the following steps:

- Generate a shared access signature (also known as a SAS token) to grant access to the storage container instead of using the less secure storage account key.

- Use an Azure CLI task to inject Service Principal credentials into the pipeline process at runtime.

- A shell script to configure and run Pulumi.

The most obvious difference is that a Bash task replaces the Pulumi tasks. There is an open bug preventing us from using the Pulumi pipeline task. When the Pulumi task runs, it fails to read the process environment, so it never reads the SAS token or other secrets I tried to inject. Although the Pulumi task does have better integration with Service Connections in DevOps, we need to use the Bash task because it reads the process environment correctly. Luckily the Pulumi docs provide starter scripts for teams that want to take the “manual approach.” Starting with those scripts, I adapted until I had a viable replacement for the built-in tasks.

The script does the following:

- Downloads the latest Pulumi CLI and places it before any others on the path.

- Performs pulumi login just as I did while working interactively.

- Uses pulumi stack select to choose the stack within the storage account.

  - I only have one stack at this point, but there could be more.

- Evaluates the same PR vs. CI vs. Manual conditions as the Pulumi tasks did before. Identical scenarios are supported.

  - PRs and Manual builds trigger only a pulumi preview run.

  - CI builds and Manual builds with a particular variable set will cause pulumi up --yes to run.

  - Finally, if none of these cases match, the script triggers a build failure.

It is essential to take note of the environment block on the Bash task. Each property in this block is an Azure DevOps pipeline secret. Secrets are only passed to the shell when referenced in this way. Otherwise, they remain encrypted and inaccessible to the bash process. We use the environment block to provide our Service Principal credentials, our SAS Token, and our secret passphrase.
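Because a missing mapping in the environment block fails quietly, a small guard at the top of the run script can help. The sketch below is an assumption-laden helper, not part of the original pipeline: `require_secret` is a hypothetical name, and the check for a literal `$(NAME)` relies on Azure Pipelines leaving macro syntax unexpanded when the referenced variable is undefined.

```shell
#!/usr/bin/env bash
# Hypothetical guard for secrets mapped through the task's env block.

# require_secret NAME
# Succeeds only if the variable NAME is set, non-empty, and is not an
# unexpanded pipeline macro such as "$(AZURE_STORAGE_TOKEN)".
require_secret() {
  local name="$1" value
  # Indirect lookup of the variable whose name is in $name (must be a valid
  # shell identifier).
  eval "value=\${$name:-}"
  case "$value" in
    "")
      echo "missing: $name" >&2
      return 1 ;;
    '$('*')')
      echo "unexpanded macro in: $name" >&2
      return 1 ;;
  esac
  return 0
}
```

In the Pulumi Run script, this could be called before pulumi login, for example `require_secret AZURE_STORAGE_SAS_TOKEN && require_secret PULUMI_CONFIG_PASSPHRASE`, so the build fails with a clear message instead of an opaque authentication error.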

We get these secrets using a pair of scripts. The environment setup script is an Azure CLI task that simply injects its Service Connection credentials into the pipeline as secrets:

    #!/usr/bin/env bash
    set -euo pipefail

    echo "##vso[task.setvariable variable=AZURE_CLIENT_ID;issecret=true]${servicePrincipalId}"
    echo "##vso[task.setvariable variable=AZURE_CLIENT_SECRET;issecret=true]${servicePrincipalKey}"
    echo "##vso[task.setvariable variable=AZURE_SUBSCRIPTION_ID;issecret=true]$(az account show --query 'id' -o tsv)"
    echo "##vso[task.setvariable variable=AZURE_TENANT_ID;issecret=true]${tenantId}"

This script works because the pipeline sets the addSpnToEnvironment property to true on the task that runs the script. The script to generate the storage key is also an Azure CLI task:

    #!/usr/bin/env bash

    # To ensure the az CLI is logged in and out properly, run this task inside an
    # Azure CLI DevOps task.
    set -euo pipefail

    # Calculate the token expiration time
    TOKEN_EXPIRATION=$(date -u -d "1 hour" '+%Y-%m-%dT%H:%MZ')

    # Generate a read-write SAS token for the private backend storage container
    TOKEN=$(
      az storage container generate-sas \
        --account-name ${AZURE_STORAGE_ACCOUNT} \
        --name ${AZURE_STORAGE_CONTAINER} \
        --expiry ${TOKEN_EXPIRATION} \
        --permissions acdlrw \
        --https-only \
        --as-user \
        --output tsv
    )

    # Set the token as a pipeline variable for other steps to use.
    echo "##vso[task.setvariable variable=AZURE_STORAGE_TOKEN;issecret=true]${TOKEN}"

This task generates a SAS token (with all available permissions) that expires one hour from creation. I experimented with a few different permission settings but did not identify the smallest required set! So, with further experimentation, I might narrow down this permission scope. More importantly, this SAS token script would not work until I added the Storage Blob Data Contributor role to my Azure DevOps service principal. The Contributor role permission on the subscription was not sufficient. The internet mentions this requirement here and there, but it took me a while to internalize what I needed to do.
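If one hour turns out to be longer than a pipeline run needs, the expiry calculation and the logging command are easy to factor into helpers. The sketch below uses hypothetical function names (`sas_expiry`, `set_pipeline_secret`) and assumes GNU date, which is what the ubuntu-latest agent image provides; it does not change the permission set discussed above.

```shell
#!/usr/bin/env bash
# Hypothetical helpers extracted from the SAS token script.

# sas_expiry [MINUTES]
# Prints a UTC timestamp MINUTES from now (default 60) in the same format the
# token script passes to --expiry. Requires GNU date for the -d option.
sas_expiry() {
  local minutes="${1:-60}"
  date -u -d "+${minutes} minutes" '+%Y-%m-%dT%H:%MZ'
}

# set_pipeline_secret NAME VALUE
# Emits the Azure DevOps logging command that stores VALUE as a secret
# pipeline variable called NAME for later steps.
set_pipeline_secret() {
  echo "##vso[task.setvariable variable=$1;issecret=true]$2"
}
```

With these in place, the script could pass `--expiry "$(sas_expiry 15)"` to shorten the token lifetime to fifteen minutes and finish with `set_pipeline_secret AZURE_STORAGE_TOKEN "${TOKEN}"`.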

Once I overcame that issue, I found that pulumi preview worked in my pipeline. From there, I iterated on the script until I had what you see above.

The Big Picture

Pulumi is very easy to get started with, but I still have more questions. I want to explore using Azure Key Vault as my secret provider. Besides that, as someone coming from Terraform, the most challenging concept to accept was the idea that there is no saved plan. This difference forced me to think about alternative ways to manage previews and releases.

Pulumi ultimately expects you, the operator, to act as the final safety check. Using branch policies to enforce pull request validation builds is one way to provide the opportunity for review. It forces us into a branching strategy that we might not otherwise choose. And while that introduces a constraint, Pulumi provides some fantastic tools in return. With Pulumi, we can leverage powerful languages like C# and TypeScript. These mature languages can do things that HCL and Terraform simply do not.

You may still feel more comfortable with the saved plan that Terraform provides. While there is some discussion on the Pulumi issue tracker about adding this feature, I would not say it is a make-or-break requirement. Whether you choose Terraform or Pulumi, using an Infrastructure as Code tool mitigates many sources of human error in traditional infrastructure management techniques. With the right checks and reviews, you can safely reap the benefits of a robust management system like Pulumi.

Original Article Source: Beginner’s Guide to Pulumi CI/CD Pipelines written by Jim Counts (If you're reading this somewhere other than Build5Nines.com, it was republished without permission.)

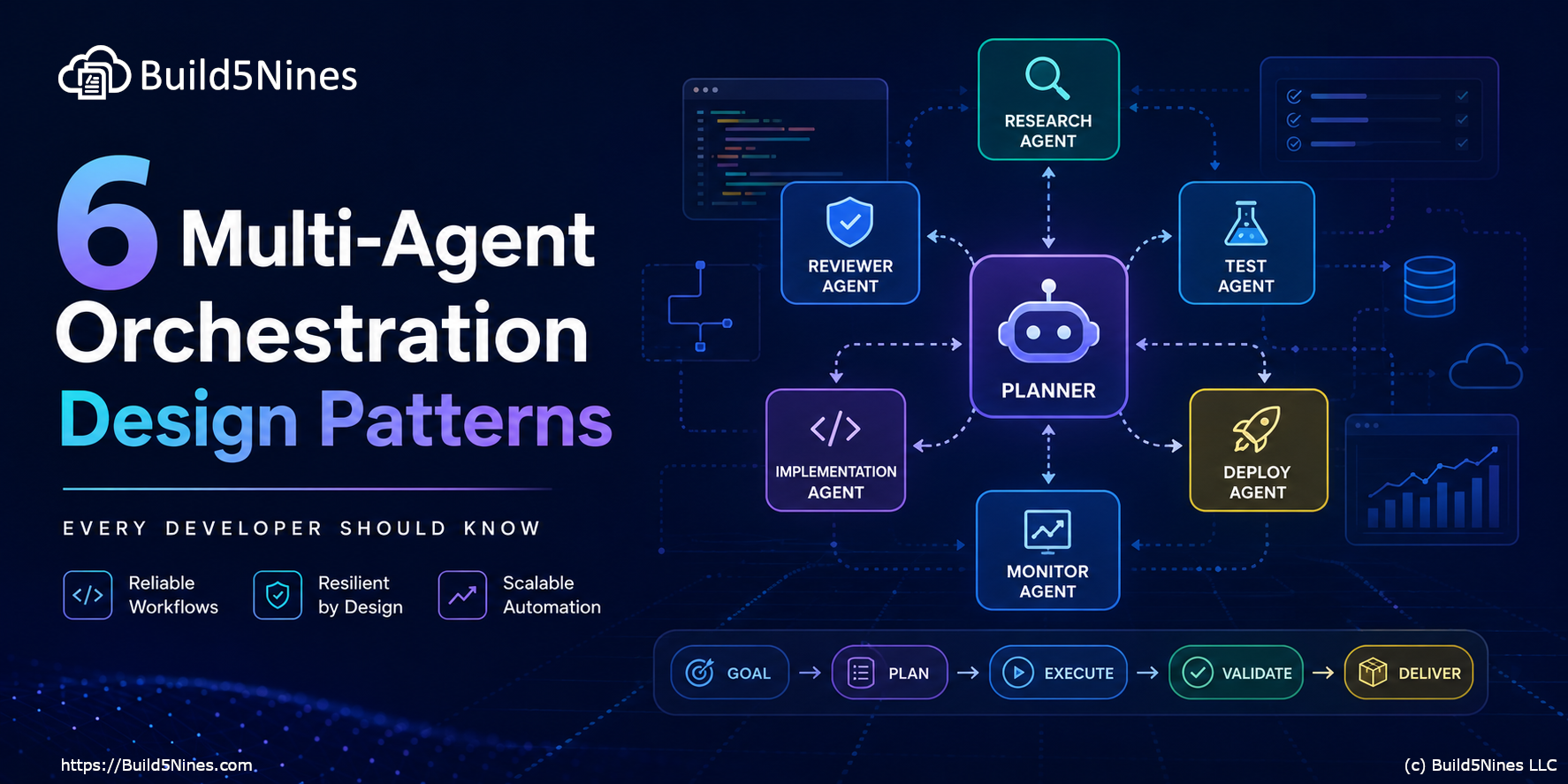

6 Multi-Agent Orchestration Design Patterns Every Developer Should Know

6 Multi-Agent Orchestration Design Patterns Every Developer Should Know

The Software Dark Factory and the Future of Software Development

The Software Dark Factory and the Future of Software Development

Run Gemma 4 Locally with GitHub Copilot and VS Code

Run Gemma 4 Locally with GitHub Copilot and VS Code

Microsoft Azure Regions: Interactive Map of Global Datacenters

Microsoft Azure Regions: Interactive Map of Global Datacenters

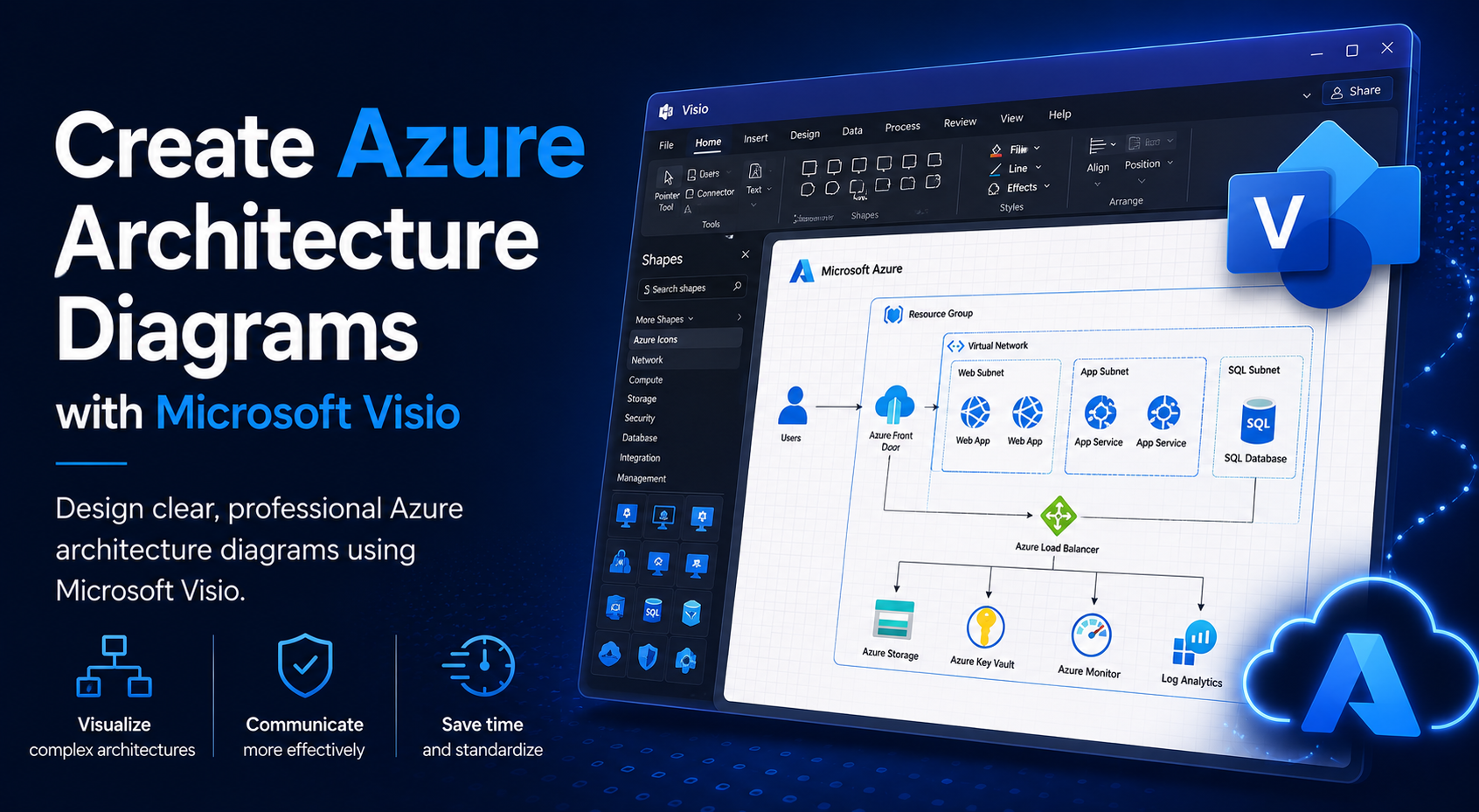

Create Azure Architecture Diagrams with Microsoft Visio

Create Azure Architecture Diagrams with Microsoft Visio